When trying to improve the world, we can either pursue direct interventions, such as directly helping beings in need and doing activism on their behalf, or we can pursue research on how we can best improve the world, as well as on what improving the world even means in the first place.

Of course, the distinction between direct work and research is not a sharp one. We can, after all, learn a lot about the “how” question by pursuing direct interventions, testing out what works and what does not. Conversely, research publications can effectively function as activism, and may thereby help bring about certain outcomes quite directly, even when such publications do not deliberately try to do either.

But despite these complications, we can still meaningfully distinguish more or less research-oriented efforts to improve the world. My aim here is to defend more research-oriented efforts, and to highlight certain factors that may lead us to underinvest in research and reflection. (Note that I here use the term “research” to cover more than just original research, as it also covers efforts to learn about existing research.)

Contents

- Some examples

- I. Cause Prioritization

- II. Effective Interventions

- III. Core Values

- The steelman case for “doing”

- We can learn a lot by acting

- Direct action can motivate people to keep working to improve the world

- There are obvious problems in the world that are clearly worth addressing

- Certain biases plausibly prevent us from pursuing direct action

- The case for (more) research

- We can learn a lot by acting — but we are arguably most limited by research insights

- Objections: What about “long reflection” and the division of labor?

- Direct action can motivate people — but so can (the importance of) research

- There are obvious problems in the world that are clearly worth addressing — but research is needed to best prioritize and address them

- Certain biases plausibly prevent us from pursuing direct action — but there are also biases pushing us toward too much or premature action

- The Big Neglected Question

- Conclusion

- Acknowledgments

Some examples

Perhaps the best way to give a sense of what I am talking about is by providing a few examples.

I. Cause Prioritization

Say our aim is to reduce suffering. Which concrete aims should we then pursue? Maybe our first inclination is to work to reduce human poverty. But when confronted with the horrors of factory farming, and the much larger number of non-human animals compared to humans, we may conclude that factory farming seems the more pressing issue. However, having turned our gaze to non-human animals, we may soon realize that the scale of factory farming is small compared to the scale of wild-animal suffering, which might in turn be small compared to the potentially astronomical scale of future moral catastrophes.

With so many possible causes one could pursue, it is likely suboptimal to settle on the first one that comes to mind, or to settle on any one of them without having made a significant effort to consider where one can make the greatest difference.

II. Effective Interventions

Next, say we have settled on a specific cause, such as ending factory farming. Given this aim, there is a vast range of direct interventions one could pursue, including various forms of activism, lobbying to influence legislation, or working to develop novel foods that can outcompete animal products. Yet it is likely suboptimal to pursue any of these particular interventions without first trying to figure out which of them has the best expected impact. After all, different interventions may differ greatly in terms of their cost-effectiveness, which suggests that it is reasonable to make significant investments into figuring out which interventions are best, rather than rushing into action mode (although the drive to do the latter is understandable and intuitive, given the urgency of the problem).

III. Core Values

Most fundamentally, there is the question of what matters and what is most worth prioritizing at the level of core values. Our values ultimately determine our priorities, which renders clarification of our values a uniquely important and foundational step in any systematic endeavor to improve the world.

For example, is our aim to maximize a net sum of “happiness minus suffering”, or is our aim chiefly to minimize extreme suffering? While there is significant common ground between these respective aims, there are also significant divergences between them, which can matter greatly for our priorities. The first view implies that it would be a net benefit to create a future that contains vast amounts of extreme suffering as long as that future contains enough happiness to outweigh it, while the second view would recommend the path of least extreme suffering.

In the absence of serious reflection on our values, there is a high risk that our efforts to improve the world will not only be suboptimal, but even positively harmful relative to the aims that we would endorse most strongly upon reflection. Yet efforts to clarify values are nonetheless extremely neglected — and often completely absent — in endeavors to improve the world.

The steelman case for “doing”

Before making a case for a greater focus on research, it is worth outlining some of the strongest reasons in favor of direct action (e.g. directly helping other beings and doing activism on their behalf).

We can learn a lot by acting

- The pursuit of direct interventions is a great way to learn important lessons that may be difficult to learn by doing pure research or reflection.

- In particular, direct action may give us practical insights that are often more in touch with reality than are the purely theoretical notions that we might come up with in intellectual isolation. And a lack of practical insights and skills often cannot be compensated for by purely intellectual insights.

- Direct action often has clearer feedback loops, and may therefore provide a good opportunity to both develop and display useful skills.

Direct action can motivate people to keep working to improve the world

- Research and reflection can be difficult, and it is often hard to tell whether one has made significant progress. In contrast, direct action may offer a clearer indication that one is really doing something to improve the world, and it can be easier to see when one is making progress (e.g. whether people altered their behavior in response to a given intervention, or whether a certain piece of legislation changed or not).

There are obvious problems in the world that are clearly worth addressing

- For example, we do not need to do more research to know that factory farming is bad, and it seems reasonable to think that evidence-based interventions that significantly reduce the number of beings who suffer on factory farms will be net beneficial.

- Likewise, it is probably beneficial to build a healthy movement of people who aim to help others in effective ways, and who reflect on and discuss what “helping others” ideally entails.

Certain biases plausibly prevent us from pursuing direct action

- It seems likely that we have a passivity bias of sorts. After all, it is often convenient to stay in one’s intellectual armchair rather than to get one’s hands dirty with direct work that may fall outside of one’s comfort zone, such as doing street advocacy or running a political campaign.

- There might also be an omission bias at work, whereby we judge a failure to do harm-preventing direct work less harshly than an equivalent commission of harm.

The case for (more) research

I endorse all the arguments outlined above in favor of “doing”. In particular, I think they are good arguments in favor of maintaining a strong element of direct action in our efforts to improve the world. Yet they are less compelling when it comes to establishing the stronger claim that we should focus more on direct action (on the current margin), or that direct action should represent the majority of our altruistic efforts at this point in time. I do not think either of those claims follows from the arguments above.

In general, it seems to me that altruistic endeavors tend to focus far too strongly on direct action while focusing far too little on research. This is hardly a controversial claim, at least not among aspiring effective altruists, who often point out that research on cause prioritization and on the cost-effectiveness of different interventions is important and neglected. Yet it seems to me that even effective altruists tend to underinvest in research, and to jump the gun when it comes to cause selection and direct action, and especially when it comes to the values that they choose to steer by.

A helpful starting point might be to sketch out some responses to the arguments outlined in the previous section, to note why those arguments need not undermine a case for more research.

We can learn a lot by acting — but we are arguably most limited by research insights

The fact that we can learn a lot by acting, and that practical insights and skills often cannot be replaced by pure conceptual knowledge, does not rule out that our potential for beneficial impact might generally be most bottlenecked by conceptual insights.

In particular, clarifying our core values and exploring the best causes and interventions arguably represent the most foundational steps in our endeavors to improve the world, suggesting that they should — at least at the earliest stages of our altruistic endeavors — be given primary importance relative to direct action (even as direct action and the development of practical skills also deserve significant priority, perhaps even more than 20 percent of the collective resources we spend at this point in time).

The case for prioritizing direct action would be more compelling if we had a lot of research that delivered clear recommendations for direct action. But I think there is generally a glaring shortage of such research. Moreover, research on cause prioritization often reveals plausible ways in which direct altruistic actions that seem good at first sight may actually be harmful. Such potential downsides of seemingly good actions constitute a strong and neglected reason to prioritize research more — not to get perpetually stuck in research, but to at least map out the main considerations for and against various actions.

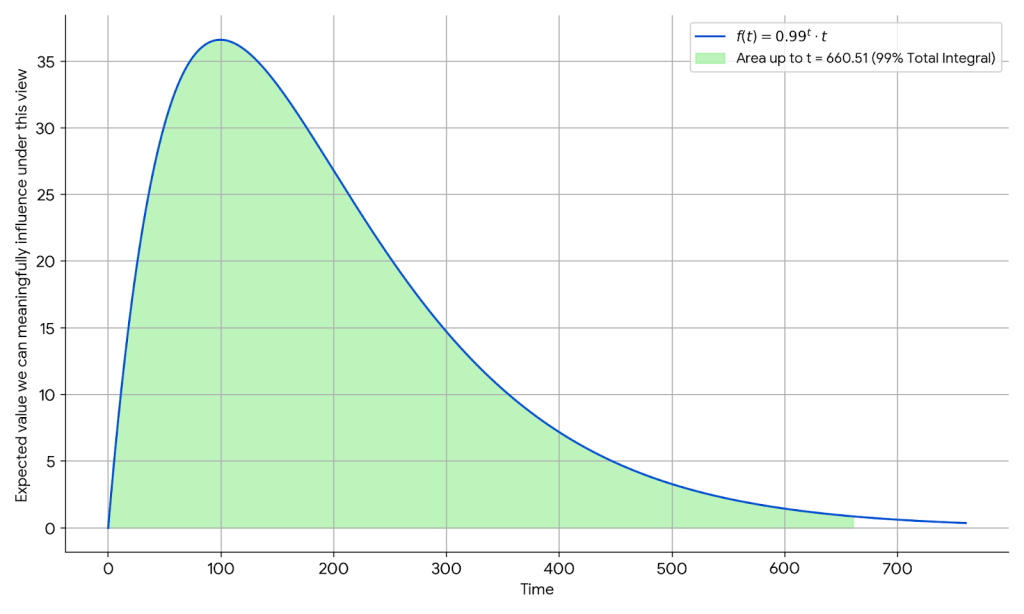

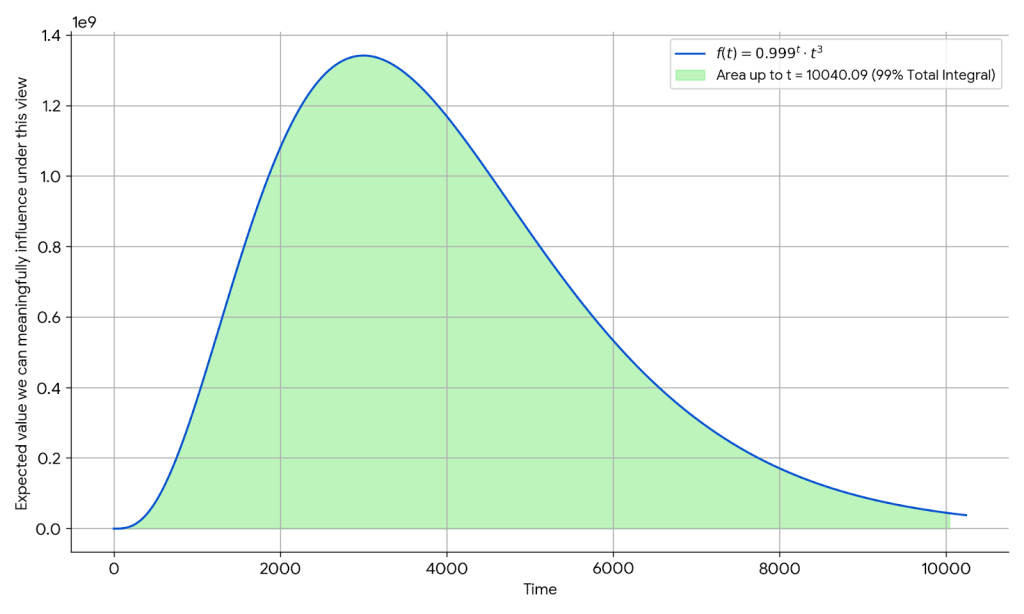

To be more specific, it seems to me that the expected value of our actions can change a lot depending on how deep our network of crucial considerations goes, so much so that adding an extra layer of crucial considerations can flip the expected value of our actions. Inconvenient as it may be, this means that our views on what constitutes the best direct actions have a high risk of being unreliable as long as we have not explored crucial considerations in depth. (Such a risk always exists, of course, yet it seems that it can at least be markedly reduced, and that our estimates can become significantly better informed even with relatively modest research efforts.)

At the level of an individual altruist’s career, it seems warranted to spend at least one year reading about and reflecting on fundamental values, one year learning about the most important cause areas, and one year learning about optimal interventions within those cause areas (ideally in that order, although one may fruitfully explore them in parallel to some extent; and such a full year’s worth of full-time exploration could, of course, be conducted over several years). In an altruistic career spanning 40 years, this would still amount to less than ten percent of one’s work time focused on such basic exploration, and less than three percent focused on exploring values in particular.
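As a quick check of these proportions, under the stated assumptions of a 40-year career with three full-time years of basic exploration, one of which is devoted to values:

```latex
\[
\frac{3\ \text{years}}{40\ \text{years}} = 7.5\% < 10\%,
\qquad
\frac{1\ \text{year}}{40\ \text{years}} = 2.5\% < 3\%.
\]
```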

A similar argument can be made at a collective level: if we are aiming to have a beneficial influence on the long-term future (say, the next million years), it seems warranted to spend at least a few years focused primarily on what a beneficial influence would entail (i.e. clarifying our views on normative ethics), as well as on researching how we can best influence the long-term future, before we proceed to spend most of our resources on direct action. And it may be even better to try to encourage more people to pursue such research, ideally creating an entire research project in which a large number of people collaborate to address these questions.

Thus, even if it is ideal to mostly focus on direct action over the entire span of humanity’s future, it seems plausible that we should focus most strongly on advancing research at this point, where relatively little research has been done, and where the explore-exploit tradeoff is likely to favor exploration quite strongly.

Objections: What about “long reflection” and the division of labor?

An objection to this line of reasoning is that heavy investment into reflection is premature, and that our main priority at this point should instead be to secure a condition of “long reflection” — a long period of time in which humanity focuses on reflection rather than action.

Yet this argument is problematic for a number of reasons. First, there are reasons to doubt that a condition of long reflection is feasible or even desirable, given that it would seem to require strong limits to voluntary actions that diverge from the ideal of reflection. To think that we can choose to create a condition of long reflection may be an instance of the illusion of control. Human civilization is likely to develop according to its immediate interests, and seems unlikely to ever be steered via a common process of reflection.

Second, even if we were to secure a condition of long reflection, there is no guarantee that humanity would ultimately be able to reach a sufficient level of agreement regarding the right path forward — after all, it is conceivable that a long reflection could go awfully wrong, and that bad values could win out due to poor execution or malevolent agents hijacking the process.

The limited feasibility of a long reflection suggests that there is no substitute for reflecting now. Failing to clarify and act on our values from this point onward carries a serious risk of pursuing a suboptimal path that we may not be able to reverse later. The resources we spend pursuing a long reflection (which seems unlikely to ever occur) are resources not spent on addressing issues that might be more important and more time-sensitive, such as steering away from worst-case outcomes.

Another objection might be that the division of labor favors having only some people focus on research, while others, perhaps even most, focus comparatively little on it. Yet while it seems trivially true that some people should focus more on research than others, this is not necessarily much of a reason against devoting more of our collective attention toward research (on the current margin), nor a reason against each altruist making a significant effort to read up on existing research.

After all, even if only a limited number of altruists should focus primarily on research, it still seems necessary that those who aim to put cutting-edge research into practice also spend time reading that research, which requires a considerable time investment. Indeed, even when one chooses to mostly defer to the judgments of other people, one will still need to make an effort to evaluate which people are most worth deferring to on different issues, followed by an effort to adequately understand what those people’s views and findings entail.

This point also applies to research on values in particular. That is, even if one prioritizes direct action over research on fundamental values, it still seems necessary to spend a significant amount of time reading up on other people’s work on fundamental values if one is to make a qualified judgment regarding which values one will attempt to steer by.

The division of altruistic labor is thus consistent with the recommendation that every dedicated altruist should spend at least a full year reading about and reflecting on fundamental values (just as the division of “ordinary” labor is consistent with everyone spending a certain amount of time on basic education). And one can further argue that the division of altruistic labor, and specialized work on fundamental values in particular, is only fully utilized if most people spend a decent amount of time reading up on and making use of the insights provided by others.

Direct action can motivate people — but so can (the importance of) research

While research work is often challenging, and it can be hard to find the motivation to pursue it, it is probably a mistake to view our motivation to do research as something that is fixed. There are likely many ways to increase our motivation to pursue research, not least by strongly internalizing the (highly counterintuitive) importance of research.

Moreover, the motivating force provided by direct action might be largely maintained as long as one includes a strong component of direct action in one’s altruistic work (by devoting, say, 25 percent of one’s resources toward direct action).

In any case, reduced individual motivation to pursue research seems unlikely to be a strong reason against giving greater priority to research at the level of collective resources and priorities (even if it might play a significant role in many individual cases). This is partly because the average motivation to pursue these respective endeavors seems unlikely to differ greatly (after all, many people will be more motivated to pursue research than direct action), and partly because urgent necessities are worth prioritizing and paying for even if they happen to be less than highly motivating.

By analogy, the cleaning of public toilets is also worth prioritizing and paying for, even if it may not be the most motivating pursuit for those who do it, and the same point arguably applies even more strongly in the case of the most important tasks necessary for achieving altruistic aims such as reducing extreme suffering. Moreover, the fact that altruistic research may be unusually taxing on our motivation (e.g. due to a feeling of “analysis paralysis”) is a reason to think that such taxing research is generally neglected and hence worth pursuing on the margin.

Finally, to the extent one finds direct action more motivating than research, this might constitute a bias in one’s prioritization efforts, even if it represents a relevant data point about one’s personal fit and comparative advantage. And the same point applies in the opposite direction: to the extent that one finds research more motivating, this might make one more biased against the importance of direct action. While personal motivation is an important factor to consider, it is still worth being mindful of the tendency to overprioritize that which we consider fun and inspiring at the expense of that which is most important in impartial terms.

There are obvious problems in the world that are clearly worth addressing — but research is needed to best prioritize and address them

Knowing that there are serious problems in the world, as well as interventions that reduce those problems, does not in itself inform us about which problems are most pressing or which interventions are most effective at addressing them. Both of these aspects — roughly, cause prioritization and estimating the effectiveness of interventions — seem best advanced by research.

A similar point applies to our core values: we cannot meaningfully pursue cause prioritization and evaluations of interventions without first having a reasonably clear view of what matters, and what would constitute a better or worse world. And clarifying our values is arguably also best done through further research rather than through direct action, even as the latter may be helpful as well.

Certain biases plausibly prevent us from pursuing direct action — but there are also biases pushing us toward too much or premature action

The putative “passivity bias” outlined above has a counterpart in the “action bias”, also known as “bias for action” — a tendency toward action even when action makes no difference or is positively harmful. A potential reason behind the action bias relates to signaling: actively doing something provides a clear signal that we are at least making an effort, and hence that we care (even if the effect might ultimately be harmful). By comparison, doing nothing might be interpreted as a sign that we do not care.

There might also be individual psychological benefits explaining the action bias, such as the satisfaction of feeling that one is “really doing something”, as well as a greater feeling of being in control. In contrast, pursuing research on difficult questions can feel unsatisfying, since progress may be relatively slow, and one may not intuitively feel like one is “really doing something”, even if learning additional research insights is in fact the best thing one can do.

Political philosopher Michael Huemer similarly argues that there is a harmful tendency toward too much action in politics. Since most people are uninformed about politics, Huemer argues that most people ought to be passive in politics, as there is otherwise a high risk that they will make things worse through ignorant choices.

Whatever one thinks of the merits of Huemer’s argument in the political context, I think one should not be too quick to dismiss a similar argument when it comes to improving the long-term future — especially considering that action bias seems to be greater when we face increased uncertainty. At the very least, it seems worth endorsing a modified version of the argument that says that we should not be eager to act before we have considered our options carefully.

Furthermore, the fact that we evolved in a condition that was highly action-oriented rather than reflection-oriented, and in which action generally had far more value for our genetic fitness than did systematic research (indeed, the latter was hardly even possible), likewise suggests that we may be inclined to underemphasize research relative to how important it is for optimal impact from an impartial perspective.

This also seems true when it comes to our altruistic drives and behaviors in particular, where we have strong inclinations toward pursuing publicly visible actions that make us appear good and helpful (Hanson, 2015; Simler & Hanson, 2018, ch. 12). In contrast, we seem to have much less of an inclination toward reflecting on our values. Indeed, it seems plausible that we generally have an inclination against questioning our instinctive aims and drives — including our drive to signal altruistic intentions with highly visible actions — as well as an inclination against questioning the values held by our peers. After all, such questioning would likely have been evolutionarily costly in the past, and may still feel socially costly today.

Moreover, it is very unnatural for us to be as agnostic and open-minded as we should ideally be in the face of the massive uncertainty associated with endeavors that seek to have the best impact for all sentient beings (Vinding, 2020, sec. 9.1-9.2). This suggests that we may tend to be overconfident in, and too quick to reach, the conclusion that some particular direct action happens to be the optimal path for helping others.

Lastly, while some kind of omission bias plausibly causes us to discount the value of making an active effort to help others, it is not clear whether this bias counts more strongly against direct action than against research efforts aimed at helping others, since omission bias likely works against both types of action (relative to doing nothing). In fact, the omission bias might count more strongly against research, since a failure to do important research may feel like less of a harmful inaction than does a failure to pursue direct actions, whose connection to addressing urgent needs is usually much clearer.

The Big Neglected Question

There is one question that I consider particularly neglected among aspiring altruists, as though it occupies a uniquely impenetrable blind spot. I am tempted to call it “The Big Neglected Question”.

The question, in short, is whether anything can morally outweigh or compensate for extreme suffering. Our answer to this question has profound implications for our priorities. And yet astonishingly few people seem to seriously ponder it, even among dedicated altruists. In my view, reflecting on this question is among the first, most critical steps in any systematic endeavor to improve the world. (I suspect that a key reason this question tends to be shunned is that it seems too dark, and that people may intuitively feel that it fundamentally questions all positive and meaning-giving aspects of life. Yet it arguably does not, as even a negative answer to the question above is compatible with personal fulfillment and positive roles and lives.)

More generally, as hinted earlier, it seems to me that reflection on fundamental values is extremely neglected among altruists. Ozzie Gooen argues that many large-scale altruistic projects are pursued without any serious exploration as to whether the projects in question are even a good way to achieve the ultimate (stated) aims of these projects, despite this seeming like a critical first question to ponder.

I would make a similar argument, only one level further down: just as it is worth exploring whether a given project is among the best ways to achieve a given aim before one pursues that project, so it is worth exploring which aims are most worth striving for in the first place. This, it seems to me, is even more neglected than is exploring whether our pet projects represent the best way to achieve our (provisional) aims. There is often a disproportionate amount of focus on impact, and comparatively little focus on what that impact should most plausibly aim at.

Conclusion

In closing, I should again stress that my argument is not that we should only do research and never act. That would clearly be a failure mode, and one that we must also be keen to steer clear of. My point is rather that there are good reasons to think that it would be helpful to devote more attention to research in our efforts to improve the world, on both moral and empirical issues, especially at this early point in time.

Acknowledgments

For helpful comments, I thank Teo Ajantaival, Tobias Baumann, and Winston Oswald-Drummond.