I discussed David Pearce’s Abolitionist Project in Chapter 13 of my book on Suffering-Focused Ethics. The chapter is somewhat brief and dense, and its main points could admittedly have been elaborated further and explained more clearly. This post seeks to explore and further explain some of these points.

A good place to start might be to highlight some of the key points of agreement between David Pearce and myself.

- First and most important, we both agree that minimizing suffering should be our overriding moral aim.

- Second, we both agree that we have reason to be skeptical about the possibility of digital sentience, and at the very least not to treat it as a foregone conclusion. I note this from the outset to flag that views on digital sentience are unlikely to account for the key differences in our respective views on how best to reduce suffering.

- Third, we agree that humanity should ideally use biotechnology to abolish suffering throughout the living world, provided this is indeed the best way to minimize suffering.

The following is a summary of some of the main points I made about the Abolitionist Project in my book. There are four main points I would emphasize, none of which are particularly original (at least two of them are made in Brian Tomasik’s Why I Don’t Focus on the Hedonistic Imperative).

I.

Some studies suggest that people who have suffered tend to become more empathetic. This obviously does not imply that the Abolitionist Project is infeasible, but it does give us reason to doubt that abolishing the capacity to suffer in humans should be among our main priorities at this point.

To clarify, this is not a point about what we should do in the ideal, but rather a point about where we should currently invest our limited resources, on the margin, to best reduce suffering. If we were to focus on interventions at the level of gene editing, traits other than our capacity to suffer seem more promising to focus on, for example by increasing dispositions toward compassion. And yet interventions focused on gene editing may themselves not be among the most promising things to focus on in the first place, which leads to the next point.

II.

For even if we grant that the Abolitionist Project should be our chief aim, at least in the medium term, it still seems that the main bottleneck to its completion is found not at the technical level, but rather at the level of humanity’s values and willingness to do what would be required. I believe this is also a point that David and I mostly agree on, as he has likewise hinted, in various places, that the main obstacle to the Abolitionist Project will not be technical, but sociopolitical. This would give us reason to mostly prioritize the sociopolitical level on the margin — especially humanity’s values and willingness to reduce suffering. And the following consideration provides an additional reason in favor of the same conclusion.

III.

The third and most important point relates to the distribution of future (expected) suffering, and how we can best prevent worst-case outcomes. Perhaps the most intuitive way to explain this point is with an analogy to tax revenues: if one were trying to maximize tax revenues, one should focus disproportionately on collecting taxes from the richest people rather than the poorest, simply because that is where most of the money is.

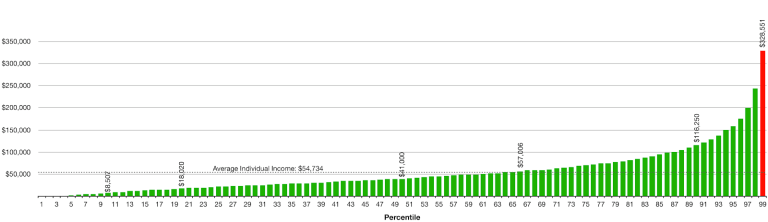

The visual representation of the income distribution in the US in 2019 found below should help make this claim more intuitive.

The point is that something similar plausibly applies to future suffering: in terms of the distribution of future (expected) suffering, it seems reasonable to give disproportionate focus to the prevention of worst-case outcomes, as they contain more suffering (in expectation).
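The arithmetic behind this point can be sketched with a toy calculation. The scenario names, probabilities, and magnitudes below are purely hypothetical placeholders, not estimates of anything; they are chosen only to show how a low-probability scenario with a very large magnitude can account for most of the expected total, much as the richest taxpayers account for most of the revenue.

```python
from typing import NamedTuple


class Scenario(NamedTuple):
    name: str
    probability: float  # hypothetical probability of the scenario
    suffering: float    # hypothetical magnitude, in arbitrary units


# Illustrative placeholders only: these numbers are assumptions made up
# for the sake of the arithmetic, not forecasts.
SCENARIOS = [
    Scenario("near-abolition future", 0.30, 1),
    Scenario("business-as-usual future", 0.60, 10),
    Scenario("worst-case future", 0.10, 1000),
]


def expected_shares(scenarios):
    """Return each scenario's share of total expected suffering."""
    # Expected contribution of a scenario = probability * magnitude.
    contributions = {s.name: s.probability * s.suffering for s in scenarios}
    total = sum(contributions.values())
    return {name: value / total for name, value in contributions.items()}


if __name__ == "__main__":
    for name, share in expected_shares(SCENARIOS).items():
        print(f"{name}: {share:.1%} of expected suffering")
```

Under these made-up inputs, the least likely scenario dominates the expected total. Different inputs would of course give different shares, so the sketch illustrates the structure of the argument rather than supporting any particular estimate.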

Futures in which the Abolitionist Project is completed, and in which our advocacy for the Abolitionist Project helps bring about its completion, say, a century sooner, are almost by definition not the kinds of future scenarios that contain the most suffering. That is, they are not worst-case futures in which things go very wrong and suffering gets multiplied in an out-of-control fashion.

Put more generally, it seems to me that advocating for the Abolitionist Project is not the best way to address worst-case outcomes, even if we assume that such advocacy has a positive effect in this regard. A more promising focus, it seems to me, is again to increase humanity’s overall willingness and capacity to reduce suffering (the strategy that also seems most promising for advancing the Abolitionist Project itself). And this capacity should ideally be oriented toward the avoidance of very bad outcomes — outcomes that to me seem most likely to stem from bad sociopolitical dynamics.

IV.

Relatedly, a final critical point is that there may be some downsides to framing our goal in terms of abolishing suffering, rather than in terms of minimizing suffering in expectation. One reason is that the former framing may invoke our proportion bias, or what is known in the literature as proportion dominance: our tendency to intuitively care more about helping 10 out of 10 individuals rather than helping 10 out of 100, even though the impact is in fact the same.

Minimizing suffering in expectation would entail abolishing suffering if that were indeed the way to minimize suffering in expectation, but the point is that it might not be. For instance, it could be that the way to reduce the most suffering in expectation is to instead mostly focus on reducing the probability and mitigating the expected badness of worst-case outcomes. And framing our aim in terms of abolishing suffering, rather than the more general and neutral terms of minimizing suffering in expectation, can hide this possibility somewhat. (I say a bit more about this in Section 13.3 in my book; see also this section.)

Moreover, talking about the complete abolition of suffering can leave the broader aim of reducing suffering particularly vulnerable to objections — e.g. the objection that completely abolishing suffering seems risky in a number of ways. In contrast, the aim of reducing intense suffering is much less likely to invite such objections, and is more obviously urgent and worthy of priority. This is another strategic reason to doubt that the abolitionist framing is optimal.

Lastly, it would be quite a coincidence if the actions that maximize the probability of the complete abolition of suffering were also exactly those actions that minimize extreme suffering in expectation; although these goals are related, they are by no means the same. Hence, to the extent that our main goal is to minimize extreme suffering, we should probably frame our objective in these terms rather than in abolitionist terms.

Reasons in favor of prioritizing the Abolitionist Project

To be clear, there are also things to be said in favor of an abolitionist framing. For instance, many people will probably find a focus on the mere alleviation and reduction of suffering to be too negative and insufficiently motivating, leading them to disengage and drop out. Such people may find it much more motivating if the aim of reducing suffering is coupled with an inspiring vision about the complete abolition of suffering and increasingly better states of superhappiness.

As a case in point, I think my own focus on suffering was in large part inspired by the Abolitionist Project and The Hedonistic Imperative, which gradually, albeit very slowly, eased my optimistic mind into prioritizing suffering. Without this light and inspiring transitional bridge, I may have remained as opposed to suffering-focused views as I was eight years ago, before I encountered David’s work.

Brian Tomasik writes something similar about the influence of these ideas: “David Pearce’s The Hedonistic Imperative was very influential on my life. That book was one of the key factors that led to my focus on suffering as the most important altruistic priority.”

Likewise, informing people about technologies that can effectively reduce or even abolish certain forms of suffering, such as novel gene therapies, may give people hope that we can do something to reduce suffering, and thus help motivate action to this end.

But I think the two reasons cited above count more as reasons to include an abolitionist perspective in our “communication portfolio”, as opposed to making it our main focus — not least in light of the four considerations mentioned above that count against the abolitionist framing and focus.

A critical question

The following question may capture the main difference between David’s view and my own.

In previous conversations, David and I have clarified that we both accept that the avoidance of worst-case outcomes is, plausibly, the main priority for reducing suffering in expectation.

This premise, together with our shared moral outlook, seems to recommend a strong focus on minimizing the risk of worst-case outcomes. The critical question is thus: What reasons do we have to think that prioritizing and promoting the Abolitionist Project is the single best way, or even among the best ways, to address worst-case outcomes?

As noted above, I think there are good reasons to doubt that advocating the Abolitionist Project is among the most promising strategies to this end (say, among the top 10 causes to pursue), even if we grant that it has positive effects overall, including on worst-case outcomes in particular.

Possible responses

Analogy to smallpox

A way to respond may be to invoke the example of smallpox: Eradicating smallpox was plausibly the best way to minimize the risk of “astronomical smallpox”, as opposed to focusing on other, indirect measures. So why should the same not be true in the case of suffering?

This is an interesting line of argument, but I think the case of smallpox is disanalogous in at least a couple of ways. First, smallpox is in a sense a much simpler and more circumscribed phenomenon than is suffering. In part for this reason, the eradication of smallpox was much easier than the abolition of suffering would be. As an infectious disease, smallpox, unlike suffering, has not evolved to serve any functional role in animals. It could thus not only be eradicated more easily, but also without unintended effects on, say, the function of the human mind.

Second, if we were primarily concerned about not spreading smallpox to space, and minimizing “smallpox-risks” in general, I think it is indeed plausible that the short-term eradication of smallpox would not be the ideal thing to prioritize with marginal resources. (Again, it is important here to distinguish between what humanity at large should ideally do and what, say, the 1,000 most dedicated suffering reducers should do with most of their resources, on the margin, in our imperfect world.)

One reason such a short-term focus may be suboptimal is that the short-term eradication of smallpox is already — or would already be, if it still existed — prioritized by mainstream organizations and governments around the world, and hence additional marginal resources would likely have a rather limited counterfactual impact to this end. Work to minimize the risk of spreading life forms vulnerable to smallpox is far more neglected, and hence does seem a fairly reasonable priority from a “smallpox-risk minimizing” perspective.

Sources of unwillingness

Another response may be to argue that humanity’s unwillingness to reduce suffering derives mostly from the sense that the problem of suffering is intractable, and hence the best way to increase our willingness to alleviate and prevent suffering is to set out technical blueprints for its prevention. In David’s words, “we can have a serious ethical debate about the future of sentience only once we appreciate what is — and what isn’t — technically feasible.”

I think there is something to be said in favor of this argument, as noted above in the section on reasons to favor the Abolitionist Project. Yet unfortunately, my sense is that humanity’s unwillingness to reduce suffering does not primarily stem from a sense that the problem is too vast and intractable. Sadly, it seems to me that most people give relatively little thought to the urgency of (others’) suffering, especially when it comes to the suffering of non-human beings. As David notes, factory farming can be said to be “the greatest source of severe and readily avoidable suffering in the world today”. Ending this enormous source of suffering is clearly tractable at a collective level. Yet most people still actively contribute to it rather than work against it, despite its solution being technically straightforward.

What is the best way to motivate humanity to prevent suffering?

This is an empirical question. But I would be surprised if setting out abolitionist blueprints turned out to be the single best strategy. Other candidates that seem more promising to me include informing people about horrific examples of suffering, as well as presenting reasoned arguments in favor of prioritizing the prevention of suffering.

To clarify, I am not arguing for any efforts to conserve suffering. The issue here is rather about what we should prioritize with our limited resources. The following analogy may help clarify my view: When animal advocates argue in favor of prioritizing the suffering of farm animals or wild animals rather than, say, the suffering of companion animals, they are not thereby urging us to conserve let alone increase the suffering of companion animals. The argument is rather that our limited resources seem to reduce more suffering if we spend them on these other things, even as we grant that it is a very good thing to reduce the suffering of companion animals.

In terms of how we rank the cost-effectiveness of different causes and interventions (cf. this distribution), I would still consider abolitionist advocacy to be quite beneficial all things considered, and probably significantly better than the vast majority of activities that we could pursue. But I would not quite rank it at the tail-end of the cost-effectiveness distribution, for some of the reasons outlined above.